In practice, AI systems reward better-formatted content over stronger findings. One of our clients invested decades building a research library of over 100 published studies, including partnerships with major research universities and more than 90 peer-reviewed publications. Their competitor has less rigorous scientific research but formats each study as a standalone web page with clear sections: what was studied, what was found and why it matters. The competitor gets cited by AI platforms. Our client doesn't.

Across hundreds of clients at RankScience, an SEO and AI search optimization agency, we see this pattern consistently. Whether it's SaaS product benchmarks, proprietary industry surveys, peer-reviewed studies, clinical data or competitive analyses, companies invest years producing original research, case studies and proprietary data, then publish it in formats AI can't extract from. We built our AI visibility framework around solving these structural problems for clients. These are the six publishing mistakes we see most often.

AI platforms attribute research findings to the domain where they discover them, not to the company that produced the research. When research lives on PubMed, third-party journal websites, GitHub repositories, Medium, industry publication sites or CDN-hosted conference PDFs, AI systems cite those platforms as the source.

Publishing on established platforms can reinforce domain authority through backlinks, but AI platforms attribute the citation itself to whichever domain hosts the extractable content. The research-producing company helps to build the platform's citation profile, not its own.

Getting research onto your own domain solves the attribution problem, but it doesn't solve the extraction problem. The content also has to be structured so AI can pull specific claims from it.

Dense technical content without section breaks prevents AI from extracting any single citable claim. SaaS companies and B2B organizations frequently publish long technical deep-dives with no heading structure, no self-contained findings and key results buried deep in the content. Think 3,000-word posts where the only sentence that actually states the outcome sits in paragraph 14. AI systems cannot isolate a single extractable claim from a wall of unstructured text, regardless of how strong the underlying research is.
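One rough way to spot this problem before publishing is to measure how much body text sits between headings. The sketch below uses only Python's standard-library `html.parser`. The `audit_structure` name and the 300-word threshold are illustrative assumptions, not a limit any AI platform documents.

```python
from html.parser import HTMLParser


class StructureAudit(HTMLParser):
    """Counts headings and the words in each run of text between them."""

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}
    SKIP = {"script", "style"}  # text inside these is not reader-visible

    def __init__(self):
        super().__init__()
        self.heading_count = 0
        self.run_lengths = [0]  # word count of each run between headings
        self._in_heading = False
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self.heading_count += 1
            self._in_heading = True
            self.run_lengths.append(0)  # every heading starts a new run
        elif tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.HEADINGS:
            self._in_heading = False
        elif tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Heading text and script/style content do not count as body text.
        if self._in_heading or self._skip_depth:
            return
        self.run_lengths[-1] += len(data.split())


def audit_structure(html, max_run_words=300):
    """Flag pages with no headings or with an overly long unbroken run.

    max_run_words is an assumed editorial threshold, not a platform rule.
    """
    parser = StructureAudit()
    parser.feed(html)
    parser.close()
    longest = max(parser.run_lengths)
    return {
        "headings": parser.heading_count,
        "longest_run_words": longest,
        "wall_of_text": parser.heading_count == 0 or longest > max_run_words,
    }
```

Running `audit_structure` over a draft gives a quick signal that a 3,000-word post needs section breaks. It says nothing about whether each section states a self-contained finding, which still needs an editor's eye.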

PDF-based research publishing is structurally incompatible with how AI platforms discover and cite content. Google's own documentation treats PDFs as indexable but structurally limited file types, and federal SEO guidance for PDF documents confirms they lack semantic HTML, rich metadata and schema support. This is why SEO for whitepapers is fundamentally harder than SEO for web pages: PDFs cannot carry structured data or schema markup and lack the title tags, heading hierarchy and metadata capabilities of HTML pages.

Research archive pages create a separate AI visibility problem. A page listing 142 citation strings with outbound links contains zero extractable claims for AI to cite. IDC, a technology market research firm, and Gartner, a technology research and advisory company, estimate that 80 to 90% of enterprise data remains unstructured.

The Content Marketing Institute (CMI), a content marketing research organization, found in its 2025 survey that 55% of enterprise marketers produce whitepapers and ebooks while 40% produce research reports, much of it still locked in PDF format.

Gating is the knockout punch when combined with PDFs: even if the PDF were optimized, AI crawlers can't reach gated content at all. NetLine, a B2B content syndication platform, reported in its 2025 State of B2B Content Consumption report that gated content demand has grown more than 80% since 2020, while the "Consumption Gap" (the time between requesting and actually opening content) widened from 31 hours to 39 hours between 2023 and 2024. Companies gate research behind download forms, buyers wait nearly two days to open it and AI platforms can't access it at all.

Research pages that lead with methodology, literature review or background context instead of findings fail AI's extraction logic. Most research teams are trained to write like journal authors. Online, that same habit quietly kills AI visibility.

AI platforms scan opening sentences for extractable claims. Our analysis of how AI extraction favors page beginnings and endings shows why methodology-first formatting puts your best findings in the lowest-extraction zone. Pages that follow academic convention (background, literature review, methodology, then findings) bury citable content below hundreds of words AI may never reach.

Case studies written as linear narratives where the outcome appears last also fail AI's extraction logic. AI needs the finding stated first, then the supporting evidence.
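The findings-first ordering can be enforced at publishing time rather than left to each author. The following is a minimal sketch, assuming a study is stored as a dictionary of named sections. The section keys, the headings in `SECTION_TITLES` and the `render_findings_first` function are hypothetical names chosen for illustration.

```python
from html import escape

# Assumed publishing order: outcome first, supporting material after.
SECTION_ORDER = ["finding", "evidence", "methodology", "background"]

# Hypothetical heading text for each section.
SECTION_TITLES = {
    "finding": "Key finding",
    "evidence": "Supporting evidence",
    "methodology": "Methodology",
    "background": "Background",
}


def render_findings_first(title, sections):
    """Render a study as HTML with the finding ahead of everything else.

    `sections` maps section keys to plain text. Keys may arrive in any
    order (for example, journal order) and empty sections are skipped.
    """
    parts = ["<article>", f"<h1>{escape(title)}</h1>"]
    for key in SECTION_ORDER:
        text = sections.get(key)
        if not text:
            continue
        parts.append(f'<section id="{key}">')
        parts.append(f"<h2>{SECTION_TITLES[key]}</h2>")
        parts.append(f"<p>{escape(text)}</p>")
        parts.append("</section>")
    parts.append("</article>")
    return "\n".join(parts)


# Usage with placeholder text; the input arrives in academic order.
page = render_findings_first(
    "Example study title",
    {
        "background": "Placeholder background.",
        "methodology": "Placeholder methodology.",
        "finding": "Placeholder statement of the main result.",
    },
)
```

Because the order lives in one constant, a case study drafted as a linear narrative still publishes with its outcome at the top of the page.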

Most research pages either lack schema markup entirely or use generic Article tags that tell AI nothing specific about the content.

For research pages, this means populating schema with specific methodologies, sample sizes, key findings and author credentials rather than defaulting to bare Article markup.
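As an illustration of richer markup, the sketch below builds JSON-LD for a research page using schema.org's `ScholarlyArticle`, `Person`, `Organization` and `Dataset` types. The mapping is an assumption: schema.org has no dedicated sample-size property on these types, so this sketch puts the sample size in the dataset `description`, the method in `measurementTechnique` and the key finding in `abstract`. The function names and input field names are hypothetical, and nothing here guarantees that a given AI platform reads these fields.

```python
import json


def build_research_jsonld(headline, key_finding, date_published,
                          authors, dataset_name, methodology, sample_size):
    """Build a JSON-LD dict for a research page (illustrative mapping)."""
    return {
        "@context": "https://schema.org",
        "@type": "ScholarlyArticle",
        "headline": headline,
        "abstract": key_finding,  # assumption: key finding goes in abstract
        "datePublished": date_published,
        "author": [
            {
                "@type": "Person",
                "name": a["name"],
                "jobTitle": a["job_title"],
                "affiliation": {"@type": "Organization",
                                "name": a["affiliation"]},
            }
            for a in authors
        ],
        "isBasedOn": {
            "@type": "Dataset",
            "name": dataset_name,
            # assumption: no sample-size property, so state it in prose
            "description": f"Sample size: {sample_size}",
            "measurementTechnique": methodology,
        },
    }


def to_script_tag(data):
    """Serialize to an embeddable script tag.

    "</" is escaped as "<\\/" (a valid JSON escape) so field text can
    never close the script element early.
    """
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

The resulting tag goes in the page's HTML. Validating the output with a structured-data testing tool before release is a sensible extra step, since search engines document their own required and recommended fields.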

Once you know what's breaking visibility, the next question is what actually predicts which pages AI chooses to cite.

Publishing format determines whether AI systems can extract and cite research findings. Multiple independent studies have measured exactly which content qualities predict AI citation.

Clarity, structural formatting and E-E-A-T signals predict AI citation more strongly than content depth or research rigor. Semrush, an SEO analytics platform, analyzed 304,805 URLs cited by LLMs and 921,614 Google-ranking URLs across 11,882 prompts. The content qualities that most strongly correlate with AI citation are:

Source: Semrush analysis of 304,805 URLs cited by LLMs across 11,882 prompts. Full study on content optimization for AI search.

This formatting advantage isn't limited to high-authority domains. RankScience's analysis of why content written for human readers underperforms in AI search shows how structural optimization creates opportunities even for lower-ranked sites.

Structural optimization can overcome ranking disadvantages in AI citation. The Princeton University Generative Engine Optimization (GEO) study (KDD 2024), testing 10,000 queries across 9 datasets, found that well-structured content can outperform higher-ranked pages inside AI answers. Their "Cite Sources" strategy increased visibility inside AI-generated answers by 115% for pages in striking distance of the top results, while some #1-ranked pages saw visibility drop by about 30%.

The point isn't that any specific ranking position is special. Generative engines evaluate content directly rather than relying on signals like backlinks and domain authority, giving structurally optimized pages an outsized advantage over higher-ranked competitors that aren't formatted for extraction.

Backlinks remain a strong indirect predictor of AI citation through organic rankings, but once AI decides which page to actually quote, backlinks don't help. Profound, a search analytics firm, found that brand search volume is the strongest direct predictor of AI citations. Once your content ranks well enough through authority signals to be considered, structure determines whether you get cited.

So the job isn't to write better research but to package existing research into extractable units AI can quote.

Companies already getting cited by AI platforms share a common content repurposing strategy that any company with original research can learn from. The approach comes down to four changes:

These aren't theoretical recommendations. The companies consistently earning AI citations already publish this way.

Publishing research as both structured HTML and downloadable PDF already works at scale in major consulting firms. McKinsey, the global management consulting firm, is one of the strongest examples of this content repurposing strategy in practice. It publishes full-text HTML versions of its research on its website with interactive elements, chapter navigation, detailed methodology sections and key findings surfaced early in each article. PDFs exist for offline use, but the HTML version is the primary publishing format.

Deloitte Insights, the research publishing arm of Deloitte, a global professional services firm, operates on the same principle. Its design system explicitly prioritizes titles that summarize the key takeaway so audiences can quickly grasp the main point. This principle translates directly to AI-friendly research pages where each section leads with its conclusion.

Think tanks including Chatham House, the Stockholm Environment Institute and the NHS Confederation are investing in CMS-based publishing that generates both interactive HTML and downloadable PDFs from the same source. One firm describes this approach as making research more searchable and easier to use with AI applications.

AI systems extract individual sections as independent citable units, not entire documents as a whole.

Wikipedia's structural pattern of neutral tone, hierarchical headings, heavy citations and self-contained sections succeeds for this reason. Wikimedia's own research on LLMs confirms that Wikipedia is among the most heavily used LLM training sources, with researchers broadly agreeing its content is likely given greater weight because of its open license and perceived reliability. Structuring research pages to mirror Wikipedia's format is effectively copying the architecture AI systems already trust most.

Individual page optimization matters, but a content strategy for AI visibility requires publishing across multiple AI platforms simultaneously, not just optimizing for Google. The window for establishing citation authority is narrowing.

Each major AI platform selects sources differently. Profound, a search analytics firm, found that ChatGPT's web-search responses match 87% of citations to Bing's top 10 results, Perplexity over-indexes Reddit at 46.7% of top-10 citation share and Google AI Overviews correlate most strongly with traditional organic rankings at 93.67%. Only 11% of domains are cited by both ChatGPT and Perplexity.

Google's own guidance on AI Overviews emphasizes that sources must be helpful, reliable and provide enough context to answer the query. In practice, that functions as a "sufficient context" filter: research pages that present findings as incomplete fragments fail this filter regardless of how rigorous the underlying research is.

The window is closing because competitors are already optimizing their research content for AI extraction. Originality.ai, an AI content detection platform, found that 10.4% of AI Overview citations are AI-generated content optimized for extractability. Semrush's analysis of the most-cited domains in AI shows that a small cluster of domains captures most AI citations, and 65% of AI bot activity targets content published within the past year.

Original research published years ago and never reformatted is increasingly invisible. Repackaging with updated structure and a current publication date puts that research back in front of AI platforms without producing a single new finding.

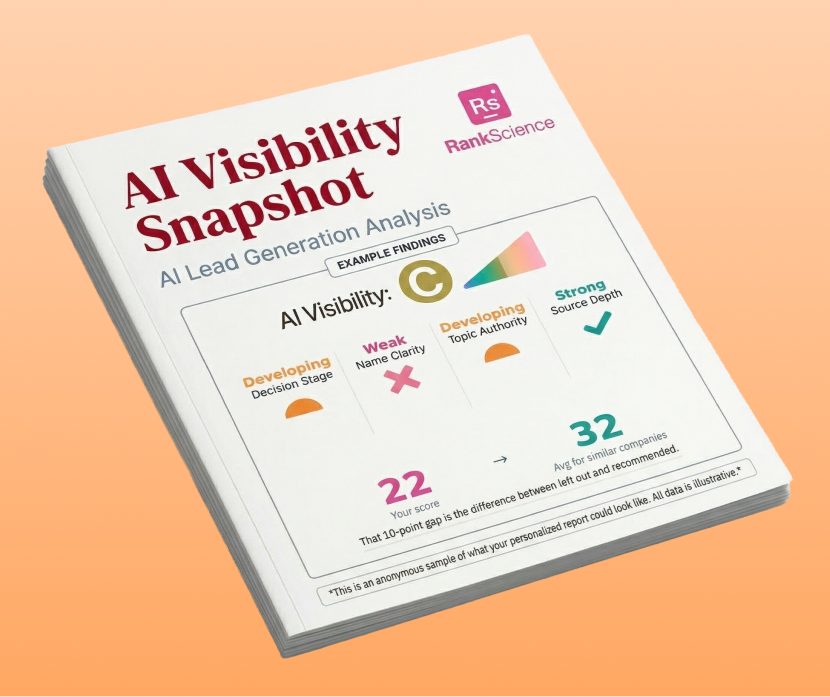


RankScience LLC

2443 Fillmore St #380-1937,

San Francisco, CA 94115

© 2026 RankScience, All Rights Reserved